The challenge of managing a deep learning model is often understanding why it does what it does: Whether it’s xAI’s repeated attempts to fine-tune Grok’s odd politics, ChatGPT’s struggles with flattery, or run-of-the-mill hallucinations, finding your way through a neural network with billions of parameters isn’t easy.

Guide Labs, a San Francisco startup led by CEO Julius Adebayo and chief science officer Aya Abdelsalam Ismail, is offering an answer to that problem. On Monday, the company released Steerling-8B, an 8-billion-parameter LLM trained with a new architecture designed to make its behavior easy to interpret: Every token the model produces can be traced back to its origins in the LLM’s training data.

That can be as simple as identifying the source material for facts the model cites, or as complex as understanding the model’s notion of humor or gender.

“If I possess a trillion ways to encode gender, and I utilize 1 billion of those trillion options, it’s essential to ensure that you discover all those 1 billion elements I’ve encoded, and then you need to be capable of turning them on or off reliably,” Adebayo told TechCrunch. “Current models can accomplish this, but it’s highly unstable… It’s essentially one of the ultimate questions.”

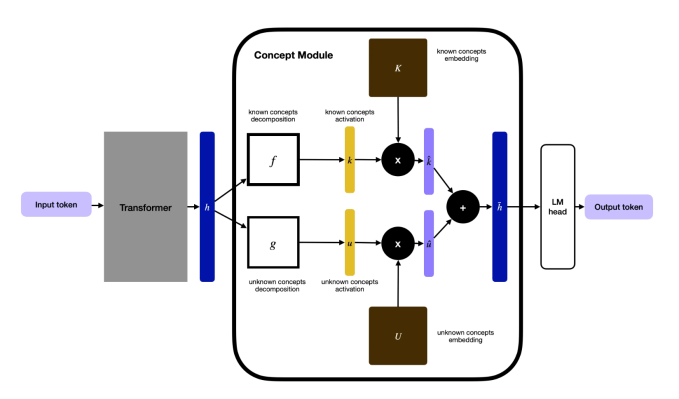

Adebayo began this work while earning his PhD at MIT, co-authoring a widely cited 2018 paper showing that existing methods for understanding deep learning models were unreliable. That work eventually led to a new way of building LLMs: Developers insert a concept layer into the model that sorts data into traceable categories. This requires more data labeling up front, but the team used other AI models to help, which allowed them to train this model as their most significant proof of concept to date.
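Guide Labs’ actual architecture is not detailed here, so the following is only a toy sketch of the general idea of a concept layer, in the style of a generic concept-bottleneck model. The concept names, weights, and functions are all hypothetical and chosen purely for illustration. The point it shows: if the output is computed only from named concept activations, each concept’s contribution can be read off directly and switched off.

```python
import math

# Hypothetical concepts and weights, invented for this illustration only.
CONCEPTS = ["finance", "humor", "violence"]

# Each concept scores a 3-dimensional input feature vector.
CONCEPT_WEIGHTS = {
    "finance": [2.0, 0.0, 0.0],
    "humor": [0.0, 2.0, 0.0],
    "violence": [0.0, 0.0, 2.0],
}

# The output depends only on concept activations, never on raw features.
OUTPUT_WEIGHTS = {"finance": 1.5, "humor": 0.5, "violence": -2.0}


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def concept_activations(features):
    """Map raw features to one activation in (0, 1) per named concept."""
    return {
        name: sigmoid(sum(w * f for w, f in zip(CONCEPT_WEIGHTS[name], features)))
        for name in CONCEPTS
    }


def predict(features, disabled=()):
    """Return (score, per-concept contributions); disabled concepts contribute 0."""
    acts = concept_activations(features)
    contributions = {
        name: 0.0 if name in disabled else OUTPUT_WEIGHTS[name] * acts[name]
        for name in CONCEPTS
    }
    return sum(contributions.values()), contributions


features = [1.0, 0.0, 1.0]
score, contributions = predict(features)
steered_score, steered = predict(features, disabled=("violence",))
```

Because the score is just the sum of the per-concept contributions, disabling `violence` shifts the output by exactly that concept’s contribution. A production LLM is vastly more complex, but this is the kind of traceability and on/off control a concept layer is meant to provide.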

“The type of interpretability that individuals generally perform is… neuroscience on a model, and we turn that around,” Adebayo stated. “What we do is actually construct the model from the foundations up so that you don’t require neuroscience.”

One concern with this approach is that it might eliminate some of the emergent behaviors that make LLMs so intriguing: their ability to generalize in new ways about concepts they have not encountered before. Adebayo says that still happens in his company’s model: His team tracks what they call “discovered concepts” that the model found on its own, such as quantum computing.

Adebayo argues that everyone will need this kind of interpretable architecture. For consumer-facing LLMs, these techniques should let model builders do things like block the use of copyrighted material or better control outputs on topics like violence or substance abuse. Regulated industries such as finance will require more controllable LLMs: A model evaluating loan applicants, for instance, must consider factors like financial history while ignoring race. There is also a need for interpretability in scientific work, another area where Guide Labs has developed technology. Protein folding has been a major success for deep learning models, but researchers need more insight into why their software identifies promising combinations.

“This model exemplifies that training interpretable models is no longer merely a scientific inquiry; it has now become an engineering challenge,” Adebayo remarked. “We have deciphered the science, and we can scale them. There’s no reason this kind of model shouldn’t achieve performance on par with frontier-level models,” which contain significantly more parameters.

Guide Labs says Steerling-8B can achieve 90% of the capability of existing models while using less training data, thanks to its new architecture. The next step for the company, which came out of Y Combinator and raised a $9 million seed round from Initialized Capital in November 2024, is to build a larger model and begin offering users API and agentic access.

“The current method of training models is quite primitive, thus making the democratization of inherent interpretability a long-term benefit for our role within humanity,” Adebayo told TechCrunch. “As we pursue models that will possess superintelligence, you don’t want an entity making choices on your behalf that remains somewhat enigmatic to you.”