Instagram will begin notifying parents if their teen repeatedly searches for terms associated with suicide or self-harm within a short period of time, the company announced on Thursday. The alerts will roll out in the coming weeks to parents who use Instagram’s parental supervision features.

The Meta-owned social network says that although it already blocks searches for suicide and self-harm content, the new alerts are designed to let parents know when their teen repeatedly tries to look up this content so they can offer support.

Searches that could trigger an alert include phrases that promote suicide or self-harm, phrases suggesting a teen may be at risk of harming themselves, and terms such as “suicide” or “self-harm.”

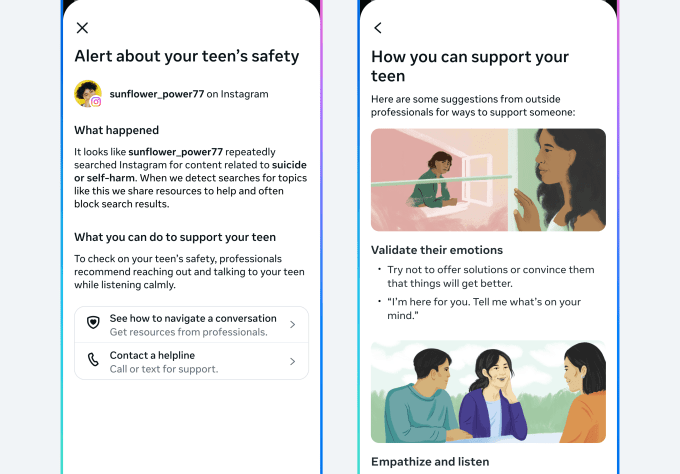

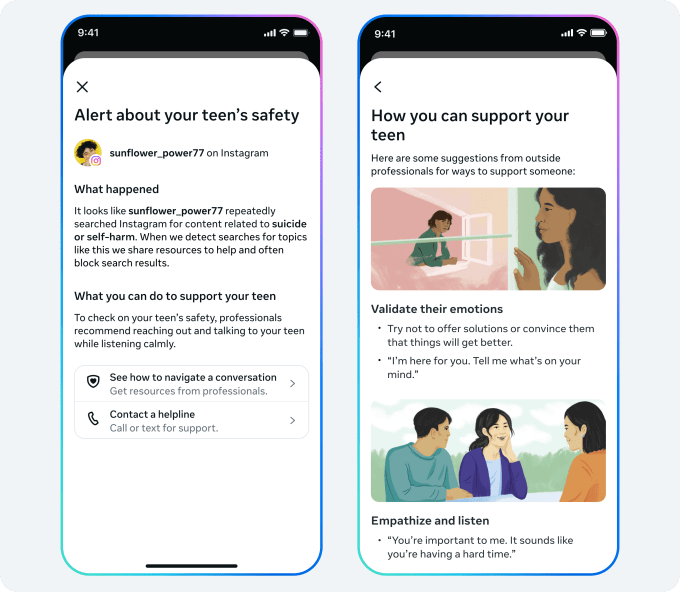

Instagram says parents will receive the alerts via email, text, or WhatsApp, depending on the contact information they have provided, as well as through an in-app notification. The notification will include resources designed to help parents talk with their teens about these topics.

The launch comes as Meta and other big tech companies face multiple lawsuits that seek to hold social media platforms accountable for harm done to teens.

During a trial in the U.S. District Court for the Northern District of California, Instagram’s head, Adam Mosseri, faced intense questioning from prosecutors over the app’s slow rollout of essential safety features, including a nudity filter for direct messages sent to teens.

Separately, in a lawsuit before the Los Angeles County Superior Court, it emerged that an internal Meta study had found that parental supervision and controls had little effect on children’s compulsive use of social media. The study also found that children experiencing stressful life events were more likely to struggle to regulate their social media use.

Given the ongoing lawsuits alleging that the platform has failed to adequately protect teens, the launch of these alerts is not particularly surprising.

The company says it will try to avoid sending these notifications unnecessarily, since too many alerts could make them less effective.

“In our efforts to find the right balance, we analyzed Instagram search patterns and consulted with experts from our Suicide and Self-Harm Advisory Group,” Instagram detailed in a blog post. “We established a threshold that calls for multiple searches in a brief period, while still prioritizing caution. Although this entails that we might occasionally inform parents even when there isn’t a significant concern, we believe — and experts concur — that this serves as an appropriate initial step, and we will keep monitoring and gathering feedback to ensure we stay on the right course.”

The alerts will be available in the U.S., U.K., Australia, and Canada starting next week, with plans to expand to additional regions later this year.

In the future, Instagram plans to send these notifications when a teen tries to engage the app’s AI in conversations about suicide or self-harm.