Soon after Amazon CEO Andy Jassy unveiled AWS’s historic $50 billion investment agreement with OpenAI, Amazon invited me on a private tour of the chip development facility central to the deal, mostly at its own expense.

Industry professionals are closely watching Amazon’s Trainium chip, developed at that site, for its potential to reduce AI inference costs and possibly challenge Nvidia’s near monopoly.

Intrigued, I decided to accept the invitation.

The day’s tour guides were the lab’s director, Kristopher King (shown below right), director of engineering Mark Carroll (below left), and Doron Aronson, the team’s PR representative who coordinated the visit (pictured with me later in the article).

AWS has been the primary cloud platform for Anthropic since the AI lab’s inception — a partnership strong enough to endure Anthropic’s later addition of Microsoft as a cloud partner, alongside Amazon’s expanding collaboration with OpenAI.

The OpenAI partnership designates AWS as the exclusive source for the model creator’s new AI agent builder, Frontier, which may play a significant role in OpenAI’s operations if agents become as impactful as Silicon Valley anticipates. It remains to be seen if this exclusivity holds true as announced. The Financial Times reported this week that Microsoft could view OpenAI’s agreement with Amazon as conflicting with its own deal with OpenAI, which includes access to all of OpenAI’s models and technology.

What makes AWS so attractive to OpenAI? As part of this agreement, the cloud giant has pledged to provide OpenAI with 2 gigawatts of Trainium computing power. This represents a substantial commitment, considering that Anthropic and Amazon’s Bedrock service are already utilizing Trainium chips faster than Amazon can manufacture them.

There are currently 1.4 million Trainium chips deployed across all three generations, and Anthropic’s Claude runs on more than 1 million of the deployed Trainium2 chips, according to the company.

It’s important to mention that while Trainium was initially designed for quicker, cost-effective model training (a higher priority a couple of years back), it is now also optimized and utilized for inference. Inference — the act of executing an AI model to generate outputs — is presently the largest performance bottleneck in the sector.

For instance, Trainium2 manages the bulk of the inference load on Amazon’s Bedrock service, which facilitates the development of AI applications by Amazon’s numerous enterprise clients and permits the applications to leverage multiple models.

“Our customer base is expanding as quickly as we can deploy capacity,” King remarked. “Bedrock could grow to rival EC2 someday,” he said, referencing AWS’s massive compute cloud service.

Trainium versus Nvidia

In addition to presenting a viable alternative to Nvidia’s backlog of hard-to-get GPUs, Amazon claims its newly developed chips running on the latest Trn3 UltraServers can cost up to 50% less to operate than conventional cloud servers delivering similar performance.

Alongside the Trainium3, introduced in December, this AWS team has also developed new Neuron switches, and Carroll asserts that this combination is revolutionary.

“What that gives us is something substantial,” Carroll stated. The switches enable every Trainium3 chip to communicate with all other chips in a mesh configuration, lowering latency. “That’s why Trainium3 is setting numerous records,” especially in terms of “price per power,” he explained.

When a system handles trillions of tokens daily, such gains add up.

Indeed, Amazon’s chip team received accolades from Apple in 2024. In a rare instance of transparency for the typically secretive company, Apple’s AI director openly highlighted how they utilized another of the team’s chips — Graviton, a low-power, ARM-based server CPU and the first notable chip designed by this group. Apple also commended Inferentia — a chip explicitly crafted for inference — and acknowledged Trainium, which was relatively new at that moment.

These chips embody Amazon’s traditional strategy: Identify consumer needs, then create an in-house alternative to compete on price.

Historically, a stumbling block for chips has been the switching costs. Applications designed for Nvidia’s chips require re-architecting to function with others — a lengthy process that dissuades developers from switching.

However, the AWS chip team proudly informed me that Trainium now supports PyTorch, a widely used open-source framework for crafting AI models. This encompasses many models available on Hugging Face, a vast repository where developers share open-source models.

The transition, Carroll mentioned, entails “essentially a one-line alteration, followed by recompiling, and then executing on Trainium.” In other words, Amazon aims to chip away at Nvidia’s market hegemony wherever feasible.

Additionally, AWS recently announced a partnership with Cerebras Systems, incorporating that company’s inference chip on servers equipped with Trainium, promising what Amazon asserts will be enhanced, low-latency AI performance.

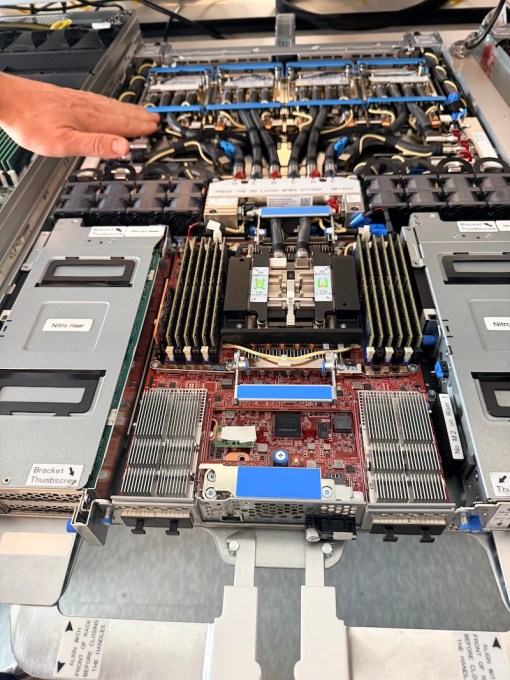

But Amazon’s aspirations extend beyond the chips themselves. It also engineers the servers that house the chips. Beyond the networking elements, this team has developed “Nitro,” a hardware-software combination that delivers virtualization technology (allowing multiple software instances to operate independently on the same server); cutting-edge liquid cooling technology; and the server sleds (depicted below) that accommodate this equipment.

All of this is to manage cost and performance.

Operating 24/7 on the “bring-up”

Amazon’s custom chip-designing division originated when the cloud giant acquired Israeli chip designer Annapurna Labs in January 2015 for approximately $350 million. Thus, this group has now been engaged in chip design for AWS for more than a decade. The division has preserved its Annapurna heritage and name — its logo is prominently displayed throughout the office.

The chip lab sits in a sleek, chrome-windowed building in The Domain, an upscale, pedestrian-friendly area of Austin that is filled with shopping and dining options and often referred to as Austin’s Silicon Valley.

The office features the quintessential tech corporate ambience: cubicle desks, communal areas, and meeting rooms. However, at the back of a high floor in the building lies the actual lab, providing expansive views of the city.

The lab itself, packed with shelving and roughly the size of two large conference rooms, is a noisy industrial environment, largely because of the sound of equipment fans. It resembles a cross between a high school workshop and an upscale lab set from Hollywood, albeit with engineers dressed in casual attire rather than white lab coats.

It’s important to clarify that this is not where the chips are physically manufactured, so no white hazmat suits were required. The Trainium3 is a cutting-edge 3-nanometer chip, fabricated by TSMC, arguably the foremost entity in 3-nanometer production, with additional chips produced by Marvell.

However, this is the location where the “bring-up” magic occurs.

“A silicon bring-up is when you receive the chip for the first time, and it’s like a grand overnight celebration. You stick around, almost like a lock-in,” King said. After 18 months of development, the chip is powered on for the first time to verify that it works as designed. The team even captured some footage of the Trainium3 bring-up and shared it on YouTube.

Spoiler alert: It’s never without issues.

For Trainium3, the prototype chip was initially designed for air cooling, similar to previous iterations. The current model, however, employs liquid cooling, which provides energy advantages and represents a substantial engineering achievement.

During the bring-up phase, the specifications for how the chip attached to the air-cooling heat sink were misaligned, preventing the chip from being activated.

Undeterred, the team “immediately retrieved a grinder and began grinding off the metal,” King recounted. To maintain the festive bring-up pizza party atmosphere, they discreetly took the grinding to a conference room.

Working through the night to resolve technical challenges “is what silicon bring-up is all about,” King stated.

The lab even features a welding station, where hardware lab engineer and master welder Isaac Guevara showcased welding small integrated circuit components through a microscope. The work is so difficult that senior leader Carroll candidly admitted he couldn’t do it, to the laughter of Guevara and the other engineers present.

The lab is equipped with both custom-designed and commercially available tools for testing and diagnosing chip issues. Here’s signal engineer Arvind Srinivasan showing how the lab tests each minuscule component on the chip:

Sleds are the main highlight of the lab

However, the standout feature of the lab is an entire row displaying each version of the “sleds” the team has engineered.

Sleds are the trays that hold the Trainium AI chips and Graviton CPU chips, along with supporting boards and components. When stacked on a rack with the networking component, which is also custom-designed by this team, they form the systems at the core of Anthropic Claude’s success.

Here’s the sled that was highlighted during the AWS re:Invent conference in December:

Validated by Anthropic and OpenAI

I expected my guides to boast about the OpenAI agreement throughout the tour. They did not.

Their reluctance may have stemmed from the legal uncertainties that may surround the deal, as noted above. Yet the impression I gathered was that these hands-on engineers (currently working on the next version, Trainium4) have had limited opportunities to collaborate with OpenAI thus far. Their day-to-day focus has primarily been on Anthropic’s and Amazon’s requirements.

At present, a significant proportion of Trainium2 chips is deployed in Project Rainier, one of the largest AI compute clusters in the world, which became operational in late 2025 with 500,000 chips. Anthropic uses it.

Nevertheless, there was a wall-mounted monitor in the main office displaying a quote about how OpenAI plans to utilize Trainium. The pride was palpable, albeit subtle.

Alongside this lab, the team also operates its own private data center for quality assurance and testing purposes. Located a short drive away, it does not host customer workloads and is situated at a co-location facility, not an AWS data center.

Security protocols are stringent: There are specific measures required to enter the building and access Amazon’s designated area within it.

The cooling system in the data center is so loud that earplugs are required, and the air is thick with the acrid scent of heated metal. It’s not a conducive environment for an average person to linger in.

In this data center, rows upon rows of servers house sleds that integrate all of Amazon’s latest custom chips: Graviton CPU, liquid-cooled Trainium3, and Amazon Nitro, all efficiently computing. The liquid runs in a closed-loop system, meaning it is recycled, which should also mitigate environmental impacts, according to the engineers.

Here’s what a current Trn3 UltraServer looks like: Multiple sleds are stacked above and below, with the Neuron switches positioned centrally. Hardware development engineer David Martinez-Darrow is depicted here performing maintenance on a sled:

The team has always drawn close attention, but scrutiny has intensified lately.

Amazon CEO Andy Jassy closely monitors this lab, boasting about its offerings like a proud parent. In December, he stated that Trainium had already become a multibillion-dollar venture for AWS, labeling it one of the pieces of AWS technology he is most thrilled about. He even highlighted the chip during the announcement of the OpenAI partnership.

The team feels the pressure as well. Engineers will work around the clock for three to four weeks surrounding each bring-up event to resolve any problems, so that the chips can be mass-produced and integrated into data centers.

“It’s crucial that we expedite the process to confirm its operational success,” Carroll noted. “Thus far, we’ve been performing exceptionally well.”

*Disclosure: Amazon covered the airfare and the cost of one night’s stay at a local hotel. True to its Leadership Principle of Frugality, this was a back-of-the-plane middle seat and a modest room. TechCrunch financed the remaining associated travel expenditures such as Ubers and baggage fees. (Yes, I checked a bag for an overnight trip. I can be a bit high maintenance.)