Mercedes unveils steer-by-wire technology in the 2026 EQS, substituting mechanical steering with electronic control systems and allowing for a novel driving and interior experience.

PS6 may be nearer than you realize, and it isn’t arriving by itself.

Recent leaks indicate that the development of the PS6 is in progress, along with a possible PlayStation handheld device, and pricing that is budget-friendly for gamers.

Claude has just closed the door on OpenClaw (unless you increase your payment)

Anthropic has introduced additional fees for utilizing Claude with OpenClaw, shifting third-party access to a pay-as-you-go model and igniting backlash from power users.

Aiper IrriSense 2 Smart Irrigation System Evaluation: Clever yet Unreliable

To utilize the Area mode, establish the region’s limits through the app, akin to other devices. Enable mapping mode, and the sprinkler will begin. Modify the water pressure to the level you prefer, targeting the edge of the yard but avoiding the fence, then drop a pin to establish the perimeter. Slightly twist the sprinkler nozzle and repeat, fine-tuning the flow to cover the intended area. Proceed until the full 360 degrees are completed, dropping pins to outline the entire yard. The system can accommodate up to 4,800 square feet, achieving 39 feet with the spray.

Inside the app, witness the map developing in real time. The task is straightforward, except for the final few points, where closing the 360-degree loop may pose challenges. The finished map might display a minor unclosed segment.

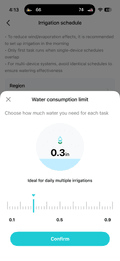

Watering can commence on demand or be set up on a schedule, with a “water consumption limit” dictating the volume of water, in inches, that is administered. Although exact precision is difficult to measure, the estimates appear plausible.

In Area mode, the IrriSense 2 disperses water in a singular direction, rotating clockwise through 360 degrees, then counter-clockwise, until the desired irrigation depth is attained.

The spray system of the IrriSense 2, described as a soft mist, operates more like a jet, particularly when reaching the yard’s far sides. This results in more water being distributed at the edges of the yard than at the center, a characteristic typical of rotary sprinklers. The system adjusts the pressure with each rotation, gradually decreasing it until the final sprays only extend a few inches from the unit. If a run is canceled prematurely, only the outer edges of the area will receive water.

Apple removed this AI application… and now it’s unexpectedly returned

Apple has restored the Anything AI app after it was taken down due to policy breaches, in response to public outcry and a smart iMessage workaround created by developers.

Is Dunesday no longer alive? Could a fresh release date genuinely rescue Avengers: Doomsday or Dune: Part Three?

Avengers: Doomsday might receive a fresh release date, but will that rescue the movie and Dune: Part Three from Dunesday this December?

Microsoft challenges Google and OpenAI with its proprietary AI models

From capturing boardroom discussions to duplicating voices in an instant, Microsoft’s MAI model trio has arrived, and its pricing is designed to pressure competitors.

Your Galaxy S26 FE may feature a previous-generation chip, and initial benchmarks have already indicated the difference.

Samsung’s affordable Galaxy S26 FE has shown up early on Geekbench, and its results validate that the Fan Edition approach, featuring a newer device with an older processor, is still going strong.

First smartphone featuring color e-ink and LCD has just made an appearance

A new mobile phone integrates a color e-ink display alongside an LCD screen, providing enhanced battery longevity and eye-comfortable functionality for reading and daily activities.

The Galaxy S26 Ultra is an eye-catcher, yet its two-year-old counterpart is still performing excellently for me.

I’m fine with my current situation for the time being.