At a military installation in central California, four-seat all-terrain vehicles traverse hillside paths. This is a training drill, but not for the operators in the vehicles: It is an initiative aimed at preparing AI models for active conflict environments.

The self-driving military ATVs are managed by Scout AI, a startup established in 2024 by Coby Adcock and Collin Otis, which refers to itself as a “frontier lab for defense.” The company announced on Wednesday that it has secured a $100 million Series A funding round, spearheaded by Align Ventures and Draper Associates, building on its $15 million seed funding round from January 2025.

Scout extended an exclusive invitation to TechCrunch for a tour of its training activities at a military base whose name the company requested not be disclosed.

The firm is developing an AI model named “Fury” to manage and operate military assets, beginning with logistical tasks but progressing towards autonomous weaponry. CTO Collin Otis likens this effort, which builds on existing large language models (LLMs), to soldier training.

“They begin their training at 18, and some even after completing college, so it’s beneficial to start with that foundational level of intelligence,” Otis explained to TechCrunch. “It’s advantageous to begin with someone who has already invested and then ask, how do I teach this system to become a remarkable military AGI, rather than just a broadly intelligent AGI?”

Scout has obtained military technology development agreements amounting to $11 million from entities such as DARPA, the Army Applications Laboratory, and other Department of Defense clients. It is among 20 autonomy firms whose technology is currently in use by the US Army’s 1st Cavalry Division during its routine training schedule at Ft. Hood in Texas, with the anticipation that this unit will deploy effective products in 2027.

During Scout’s internal evaluations, the rubber meets the dirt on the base’s rugged terrain. There, the operations team, comprising former soldiers, is testing the vehicles through simulated missions.

Self-driving cars are becoming more visible in cities worldwide, but they operate in more regulated environments. Functioning autonomously on unmarked paths or in off-road conditions is a completely different challenge. Otis, a former executive at the autonomous trucking firm Kodiak, said he was inspired to launch Scout after realizing the system he contributed to at Kodiak wasn’t sufficiently intelligent for unpredictable combat scenarios.

A fresh perspective on autonomy

Scout is looking towards a newer form of autonomy technology: Vision Language Action models, or VLAs, which are based on LLMs and utilized for controlling robots. First introduced by Google DeepMind in 2023, this technology inspired robotics startups such as Physical Intelligence and Figure.AI, the humanoid robot business led by Adcock’s sibling, Brett.

Coby Adcock serves on Figure’s board. He says this experience solidified his belief that broader intelligence could enhance the military’s expanding fleet of autonomous vehicles. His brother connected him with Otis, who was consulting for Figure, and the two began applying cutting-edge AI to military applications.

“If I handed you a drone controller right now and fitted you with a headset, you could master flying it in mere minutes,” Otis remarked. “You’re simply learning to relate your existing knowledge to these couple of joysticks. It’s not a significant leap. That’s how to conceptualize VLAs and why they represent such a breakthrough.”

Indeed, I had the opportunity to drive one of Scout’s ATVs along the rugged trails, and the terrain was demanding: steep inclines, loose sand on turns, vanishing paths, and perplexing intersections. I’m not well-versed in ATV driving but managed reasonably well on my first try (if I may say so). This general intelligence is what the company aims to replicate in its models, which have been trained on these ATVs for only six weeks, after the company used civilian ATVs to kick-start the process.

I also rode in the ATV under autonomous control, where I sensed a notable difference: it accelerated more rapidly than a human mindful of passenger comfort would. The operations crew pointed out how the vehicles keep to the right on wider paths while centering themselves on narrow ones, just as the operators who trained them do. They also slow down unexpectedly when uncertain, pausing to consider their next move, something that occurred several times as we traveled a 6.5 km loop before returning to base.

Although VLAs are sufficiently novel that they have yet to be utilized operationally by any firm, “the technology is advanced enough to permit this experimentation in the field with soldiers to ascertain the most effective practices for US forces,” stated Stuart Young, a former DARPA program manager who worked on ground vehicle autonomy. Like those of other autonomy firms, Scout’s complete autonomy stack also encompasses deterministic systems and various AI paradigms to enhance its agents’ capabilities.

Young departed from DARPA this month to join Field AI after overseeing a project named RACER. This initiative challenged companies to design high-speed, self-driving off-road vehicles, helping to grow this sector much as the agency’s Grand Challenge propelled self-driving cars forward. Two rivals in this field, Field AI and Overland AI, emerged from that program, with Scout joining later.

The initial uses of ground autonomy, as conveyed by Scout executives and military technology experts, will involve automated resupply: transporting water or ammunition to remote observation posts, or in a convoy where a crewed vehicle might be trailed by six to ten autonomous units, reserving valuable human effort for more vital assignments. Brian Mathwich, an active-duty infantry officer currently serving as a military fellow at Scout, recounted a recent exercise in Alaska where he led a resupply convoy in complete darkness and wished for autonomous vehicles to assist him.

Enhancing the Army’s vehicle capabilities

Scout considers itself primarily a software enterprise, creating an intelligence framework for military machinery. It does not plan to manufacture the autonomous vehicles but intends to build upon them.

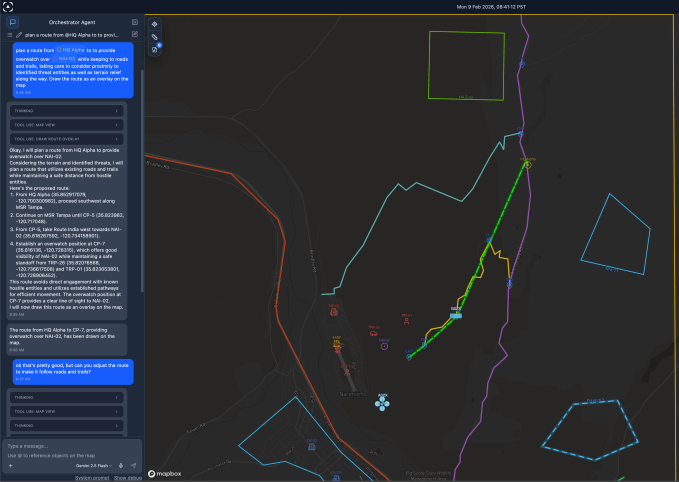

Adcock expects the startup’s first widely adopted product to be “Ox,” the firm’s command and control software, packaged with ruggedized computer hardware (GPUs, communications, cameras). This software is designed to let individual soldiers coordinate multiple drones and autonomous ground vehicles via command-like prompts: “Proceed to this waypoint and monitor for enemy forces.”

However, making this software work requires training on actual vehicles. That is the purpose of Foundry, the company’s training area at the military base. There, drivers spend eight-hour shifts navigating the ATVs and then use a reinforcement learning system to record the instances where they had to intervene; that data is used to refine the model. The base commander has requested that the company’s ATVs participate in security patrols.

One hypothesis Scout is investigating is that VLAs will leverage this relatively constrained data set, alongside simulation training data, to develop a fully capable driving agent. While the vehicle appears adept on trails, it is not yet ready for complete off-road functionality.

Scout is also experimenting with drones for reconnaissance and as potential weapons, enhancing them with intelligence through vision language models, a multi-modal variant of LLM.

Scout is developing a system in which groups of munition drones operate in conjunction with a larger “quarterback” platform that provides additional computational power to direct them. In one mission, the drones would canvass a specified area for concealed enemy tanks and engage them, potentially without human guidance. Otis asserts that the alternative method in such instances could involve indirect artillery strikes, which tend to be less precise than drone assaults.

Although autonomous weaponry is a contentious issue in defense technology politics, experts point out that the concept is not new: Heat-seeking missiles and landmines have been utilized for many years. The primary concern for technologists is the management of these weapons, according to Jay Adams, a retired U.S. Army Captain leading Scout’s operations team, who shared his insights with TechCrunch.

He emphasizes that Scout’s munition drones can be programmed to target only specified threats within a designated geographic area, or to require human confirmation before acting. He also remarks that autonomous weapon platforms are unlikely to discharge their firepower out of fear, as an eighteen-year-old soldier might.

VLAs, furthermore, promise improved targeting. Scout asserts that its models are pre-trained on a unique set of military data to prepare them for situations such as encountering an enemy tank during a resupply operation. Lt. Col. Nick Rinaldi, who oversees Scout’s initiatives for the Army Applications Laboratory, says that while automated targeting is challenging and is unlikely to be implemented outside of controlled environments soon, the VLAs’ potential to assess threats makes the technology worth exploring.

Adams believes the capability for drones to autonomously identify their own targets is crucial for the future of warfare. Despite the heightened interest in drone warfare spurred by Russia’s invasion of Ukraine, he argues that having humans control individual UAVs doesn’t scale well enough for the US to contend with vast numbers of low-cost unmanned systems should they threaten US forces.

A mission to address anti-military sentiments

Like many defense startups, Scout is unapologetic about its mission, and its leaders openly criticize firms that hesitate to share their technology with the government. For example, Google is said to have withdrawn from a Pentagon contest aimed at developing control systems for autonomous drone swarms, a capability that Scout is also pursuing.

“The AI community is hesitant to collaborate with the military,” Otis stated, referring to Anthropic’s disagreements with the Pentagon regarding its service terms. “None are willing to deploy agents on one-way attack drones or utilize agents for missile systems.”

Nonetheless, Scout is utilizing existing LLMs as the foundation to create its agents, although it chose not to disclose specific details. Otis mentioned that the company has partnerships with “well-known hyperscalers” to provide the pre-trained intelligence for its foundational model. He also refrained from commenting on whether it uses open-weight models, such as those offered by Chinese companies. Numerous firms relying on AI inference utilize these models to function at a lower cost compared to those from leading labs like Anthropic or OpenAI.

Scout plans to reduce its reliance on outside models by developing its own from the ground up in the coming years, with the founders indicating that a significant portion of its capital will be directed towards these training and computational expenses. Indeed, Otis speculates that Scout might surpass existing front-runners to AGI because its model will continually engage with the real world.

“There’s a perspective within the AGI community that you can’t achieve extensive intelligence solely by consuming information from the internet, and that most intelligence derives from real-world interactions,” Otis stated.

Does this imply Adcock is in competition with his brother’s humanoid robots at Figure? No, Otis asserts, but “we can achieve scale much more rapidly because our client has resources,” he remarked, referencing the Pentagon.
