Google unveiled several new AI features under the Gemini Intelligence brand during its Android Show: I/O Edition event on Tuesday. They include the ability for the AI to perform tasks across different apps, browse the web, fill in forms, clean up dictated speech, and even let you vibe-code custom Android widgets.

Gemini becomes more robust

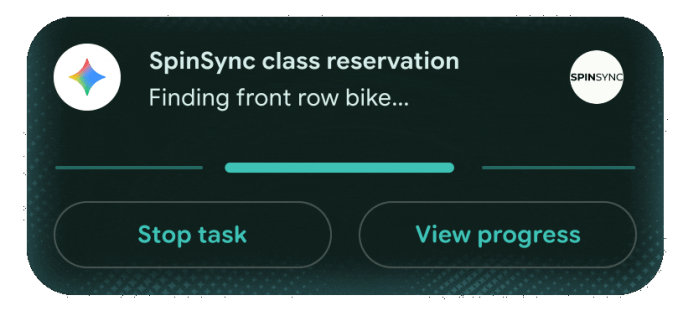

Earlier this year, at the Samsung Galaxy S26 launch, the company gave Gemini some agentic abilities, such as ordering food or booking a ride. At that event, Google said Gemini would soon be able to carry out more intricate tasks, such as reserving a front-row bike for a spin class, or retrieving a class syllabus from Gmail and searching for books relevant to that subject.

Now, Google’s AI assistant can handle multi-step processes, such as copying a grocery list from your notes app and adding the items to your shopping app’s cart. To use the feature, you press the power button on your phone and state the task. The content displayed on the phone’s screen gives the assistant context. Google emphasized that Gemini will wait for your final approval before completing the checkout.

In January, Google introduced an experimental feature that let Gemini browse the web and complete tasks such as scheduling appointments. Today, Google announced that this auto-browse functionality is also coming to Android.

By late June, Gemini in Chrome will arrive on Android. The AI feature helps users summarize content or ask questions about a web page, much like the existing Gemini in Chrome on desktop.

Gemini will also be able to fill out forms on your behalf, drawing on your personal information through Personal Intelligence. (Google said this feature is opt-in and can be disabled in settings at any time.)

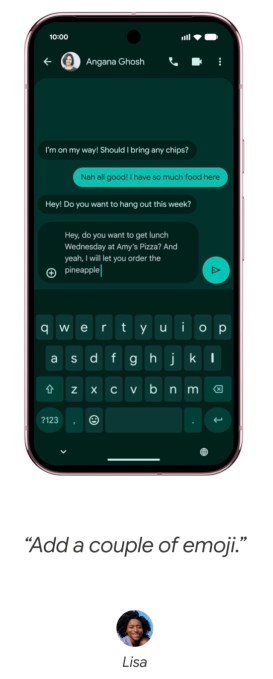

Gemini will also integrate with Android’s Gboard keyboard. Google is using Gemini’s multimodal capabilities to introduce a Gboard feature called Rambler, akin to other AI-driven dictation apps. The feature lets users speak naturally, then transcribes their speech and refines it by removing filler phrases.

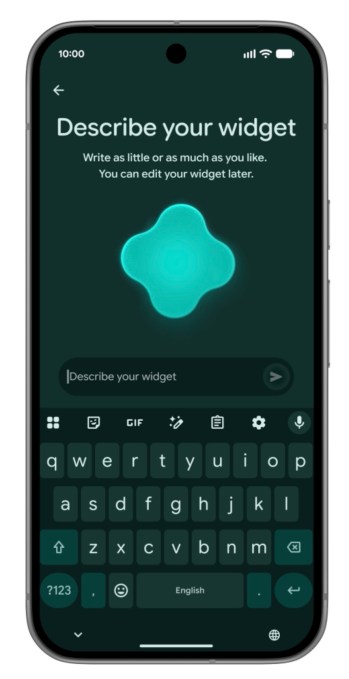

Vibe-coding apps are gaining momentum, and Google wants to give Android users a taste of the trend as well.

The company is rolling out a way for users to create Android widgets by describing them in natural language. For instance, users can generate a meal-planning widget with a prompt like, “Recommend three high-protein meal prep recipes weekly.”

The concept of creating widgets this way is not new. Hardware startup Nothing launched a comparable tool last year.

Google said Gemini Intelligence features will follow the company’s Material 3 Expressive design language.

The company said these AI features will launch first on the latest Samsung Galaxy and Google Pixel devices this summer, and will reach other Android devices later this year.