Cybersecurity researchers have revealed a series of cyberattacks aimed at Apple users around the world. The tools used in these hacking campaigns, named Coruna and DarkSword, have been used by both government operatives and cybercriminals to steal data from people’s iPhones and iPads.

Widespread hacks targeting iPhone and iPad users are rare. Over the past ten years, the comparable cases have mainly been attacks against Uyghur Muslims in China and people in Hong Kong.

Now, portions of these powerful hacking tools have surfaced online, potentially exposing hundreds of millions of iPhones and iPads running outdated software to data theft.

Here is what we know about these recent threats to iPhone and iPad security, and what you can do to protect yourself.

What are Coruna and DarkSword?

Coruna and DarkSword are two sophisticated hacking toolkits. Each contains several exploits capable of breaking into iPhones and iPads to steal sensitive data, including messages, browsing history, location data, and cryptocurrency information.

The cybersecurity researchers who identified the toolkits report that Coruna’s exploits can compromise iPhones and iPads running iOS 13 through iOS 17.2.1, the latter released in December 2023.

DarkSword, by contrast, includes exploits that can breach more recent iPhones and iPads running iOS 18.4 and 18.7, the latter released in September 2025, according to Google cybersecurity analysts who examined the code.

Of the two, DarkSword poses the more pressing danger to the general public. A portion of DarkSword has been leaked and uploaded to the code-sharing platform GitHub, allowing anyone to access the malicious code and launch attacks against Apple users on older iOS versions.

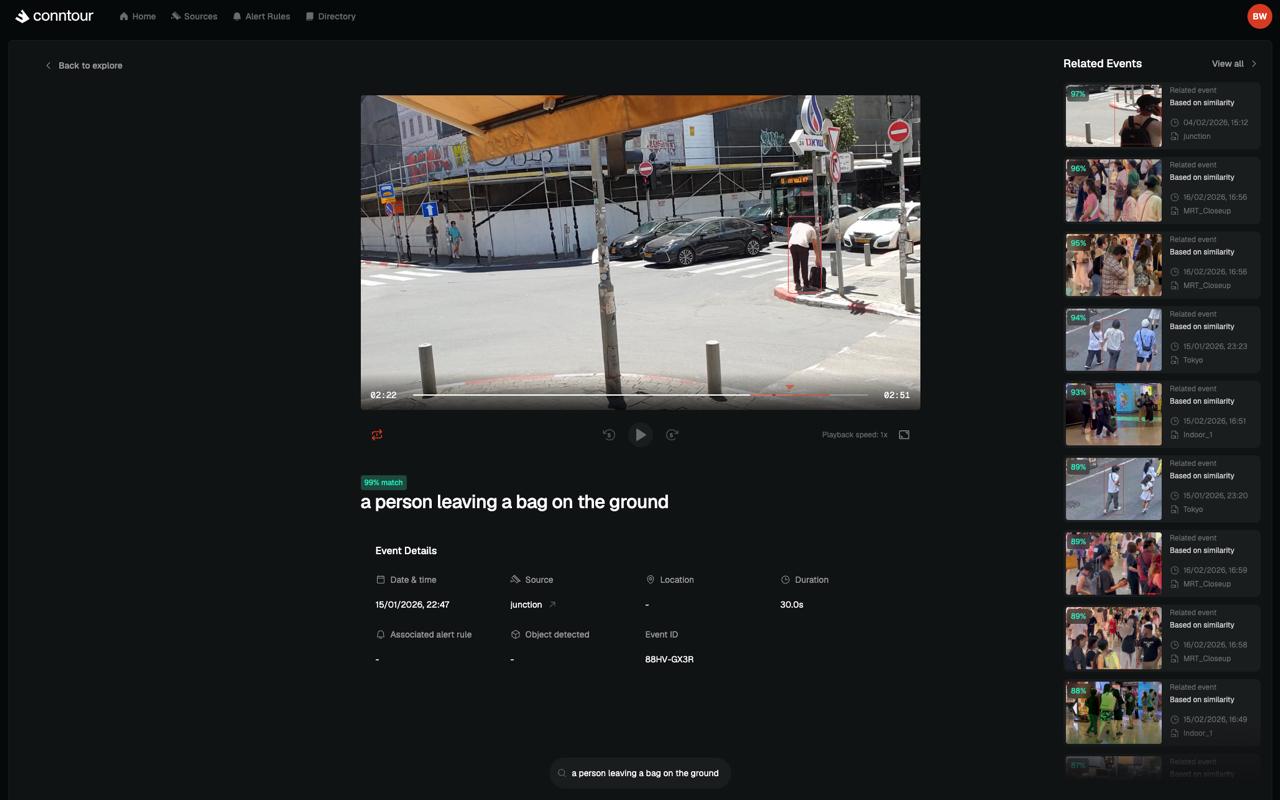

How do Coruna and DarkSword work?

These types of attacks are inherently indiscriminate and perilous, as they can ensnare any individual who visits a specific website hosting the harmful code.

In some instances, victims can be compromised merely by visiting a legitimate website that malicious hackers have taken control of.

After the initial infection, Coruna and DarkSword exploit several vulnerabilities in iOS to give hackers near-total control over the target device, which lets them extract the user’s private information. This data is then sent to a web server run by the hackers.

At least some components of the Coruna toolkit, as previously reported by TechCrunch, were initially created by Trenchant, a hacking and spyware division within the U.S. defense contractor L3Harris, which sells exploits to the U.S. government and its leading allies.

Kaspersky has also associated two exploits in Coruna’s toolkit with Operation Triangulation, a complex and likely government-led cyber operation allegedly conducted against Russian iPhone users.

After Trenchant created Coruna, the exploits reached Russian spies and Chinese cybercriminals. Exactly how remains unclear, but they potentially passed through one or more intermediaries selling exploits on the dark web.

Coruna’s trajectory shows once again that powerful hacking tools, even those developed for the U.S. under strict confidentiality protocols, can leak and spread uncontrollably.

A similar leak occurred in 2017, when an exploit developed by the U.S. National Security Agency, capable of remotely breaking into Windows computers around the world, leaked online. The same exploit was later used in the destructive WannaCry ransomware attack, which indiscriminately hacked hundreds of thousands of computers worldwide.

In DarkSword’s case, researchers have tracked attacks targeting users in China, Malaysia, Turkey, Saudi Arabia, and Ukraine. It remains unclear who originally developed DarkSword, how it reached different hacking groups, or how it leaked online.

It is also unclear who leaked the tools and uploaded them to GitHub, or why.

The hacking tools, which TechCrunch has reviewed, are written in the web languages HTML and JavaScript, which makes them relatively simple for anyone to configure and self-host in order to launch malicious attacks. (TechCrunch is not linking to the GitHub upload because the tools can be used in harmful attacks.) Researchers on X have already tested the leaked tools by hacking their own Apple devices running vulnerable versions of the company’s software.

DarkSword is now “essentially plug-and-play,” Justin Albrecht, principal researcher at the mobile security firm Lookout, told TechCrunch.

GitHub told TechCrunch that it has not removed the leaked code and will keep it available for security research purposes.

“GitHub’s Acceptable Use Policies prohibit posting content that directly supports unlawful active attacks or malware campaigns causing technical harm,” Jesse Geraci, GitHub’s online safety counsel, informed TechCrunch. “However, we do not prohibit the posting of source code that could be used to develop malware or exploits, as the dissemination of such source code has educational merit and ultimately benefits the security community.”

Is my iPhone or iPad vulnerable to DarkSword?

If your iPhone or iPad is outdated, you should strongly consider updating it without delay.

Apple told TechCrunch that users running the latest versions of iOS 15 through iOS 26 are already protected.

According to iVerify: “We highly recommend updating to iOS 18.7.6 or iOS 26.3.1. This will mitigate all vulnerabilities exploited in these attack vectors.”
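As a rough illustration of that recommendation, the sketch below compares an iOS version string against the two versions iVerify names. The function names are hypothetical, and the check only covers iOS 18 and iOS 26 because those are the only release lines for which a specific patched version is given here; for any other release line it returns `None` rather than guessing.

```python
# Minimal sketch: thresholds are the versions iVerify recommends above.
# Function and variable names are hypothetical, chosen for illustration.
RECOMMENDED = {18: (18, 7, 6), 26: (26, 3, 1)}


def parse_version(text):
    """Turn a string such as '18.7' into a 3-tuple of ints, e.g. (18, 7, 0)."""
    parts = [int(p) for p in text.strip().split(".")]
    if not 1 <= len(parts) <= 3:
        raise ValueError(f"unexpected version format: {text!r}")
    # Pad missing components with zeros so '18.7' compares as 18.7.0.
    return tuple(parts + [0] * (3 - len(parts)))


def meets_recommendation(text):
    """Return True/False for iOS 18 and 26, or None when no threshold is known."""
    version = parse_version(text)
    threshold = RECOMMENDED.get(version[0])
    if threshold is None:
        return None  # No specific patched version is given for this release line.
    # Tuples compare component by component, so (18, 10, 0) > (18, 7, 6).
    return version >= threshold
```

For example, `meets_recommendation("18.7")` returns `False`, while `meets_recommendation("18.7.6")` and `meets_recommendation("26.3.1")` return `True`. Comparing integer tuples rather than raw strings avoids ranking "18.10" below "18.7".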

Apple’s own data indicates that nearly one in three iPhone and iPad users are still not running the latest iOS 26 software. Given that Apple estimates there are more than 2.5 billion active devices worldwide, this suggests that potentially hundreds of millions of devices remain vulnerable to these hacking tools.

What if I can’t or don’t want to upgrade to iOS 26?

Apple also said that devices using Lockdown Mode, an optional enhanced security feature introduced in iOS 16, can block these particular attacks.

Lockdown Mode is useful for journalists, dissidents, human rights advocates, and anyone who believes they may be targeted because of who they are or what they do.

Although Lockdown Mode is not flawless, there is no public evidence that hackers have been able to bypass its protections so far. (We asked Apple whether that is still the case, and will update if we receive a response.) Lockdown Mode has reportedly thwarted at least one attempt to install spyware on a human rights defender’s device.