On Tuesday morning at the Android Show: I/O Edition 2026, Google introduced Rambler, an AI-powered voice dictation feature for Gboard, its popular Android keyboard app. The launch puts Google in direct competition with newer AI dictation apps such as Wispr Flow and Typeless, which have recently gained popularity on desktop and mobile, though most have yet to establish themselves on Android.

Like other dictation tools, Rambler removes filler sounds such as “ums” and “ahs.” It also understands corrections made mid-sentence, as in, “I am going to meet you on Wednesday at our usual coffee shop at 3 p.m. … um, 2 p.m.”

Google said Rambler uses Gemini-based multilingual models that support code switching, which lets users move between languages within a single sentence (for example, from English to Hindi) while Rambler keeps track of the context. This mirrors how many multilingual speakers naturally talk, something most Western dictation apps have been slow to accommodate.

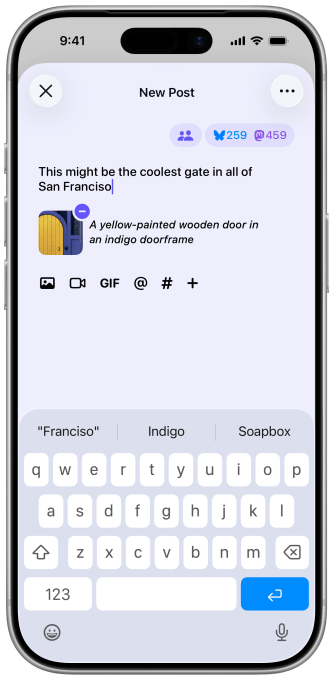

The company said Gboard will visibly indicate when Rambler is active. Google says it does not store any audio recordings and uses the audio only to transcribe what was said. During the presentation, Google emphasized that because Rambler works across all apps, it can be seen as “reinventing the keyboard.”


On privacy, Ben Greenwood, director of Android Core Experiences, said that Google combines on-device and cloud processing and has “significantly invested over many years” to ensure features are “safe and private.” That is a pointed note for users comparing Rambler with third-party dictation tools that may handle data differently.

Recently, a variety of dictation applications — Wispr Flow, Willow, Superwhisper, Monologue, Handy, and Typeless — have emerged. However, until now, much of this development has occurred on desktop and iOS platforms, leaving Android relatively neglected. Last month, Google itself launched AI Edge Eloquent, an offline-first dictation application powered by its on-device Gemma AI models, on iOS.

Rambler is Google’s clearest move yet to close this gap. The features will roll out this summer, initially only on Samsung Galaxy and Google Pixel phones, and will eventually reach other Android devices. The primary advantage here is distribution: Gboard is the default keyboard for a vast number of Android users globally, which means Rambler will come preinstalled for hundreds of millions of them. When a platform provider enters a market at the operating-system level, standalone apps need to offer significant advantages, such as better accuracy, more features, or stronger privacy assurances, to justify a separate download.

For dictation startups, the question is no longer whether they can build a high-quality product. It’s whether they can build something compelling enough that users will actively seek it out.
