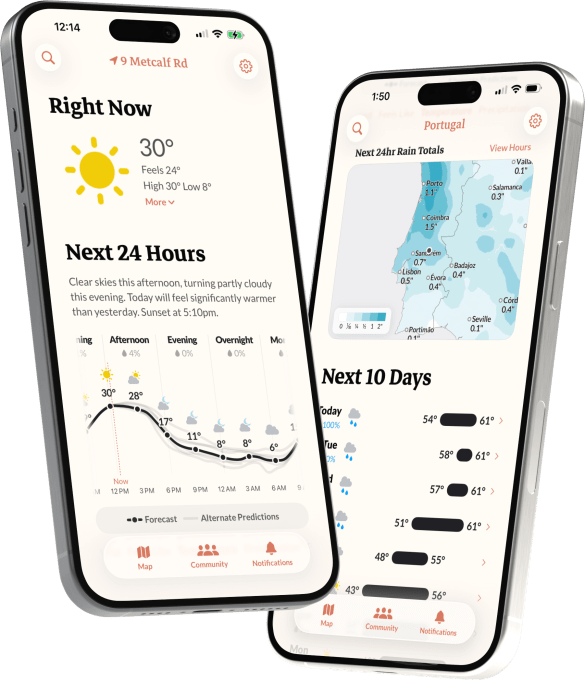

The developers behind Dark Sky, who sold their popular weather app to Apple in March 2020, are back with a new take on weather forecasting. The team recently announced the launch of its new app, Acme Weather, which it says provides a better and more reliable forecast than the one offered at Dark Sky. The app will also feature an array of distinctive weather notifications, including fun alerts for rainbows and beautiful sunsets.

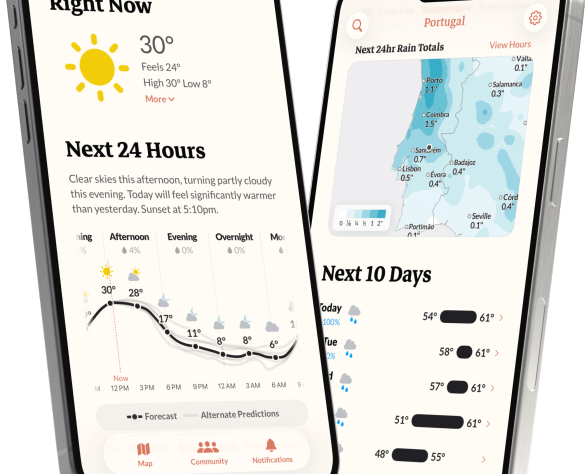

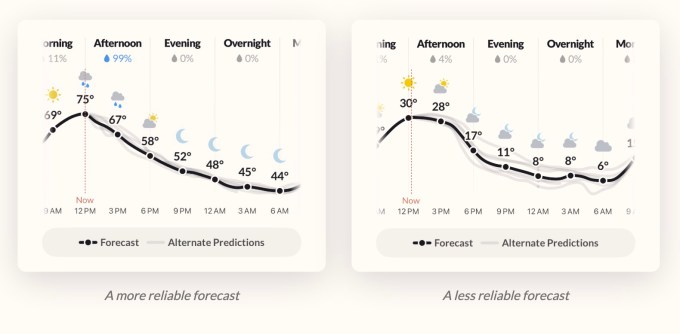

Unlike standard weather apps, Acme Weather supplements its forecast with several alternative predictions to improve accuracy.

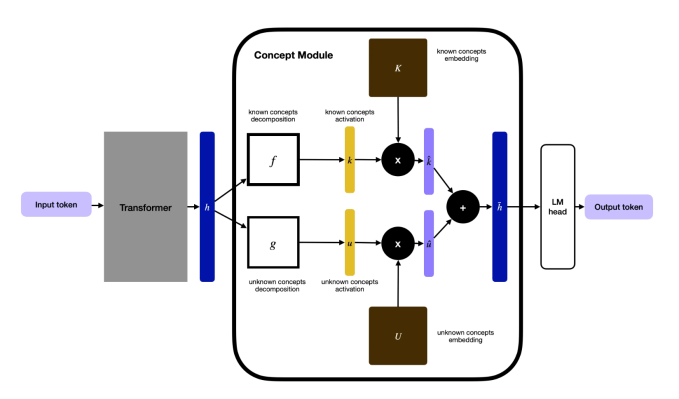

Adam Grossman, co-founder of Dark Sky, elaborates in a welcome blog entry that the app’s proprietary forecasts will utilize various numerical weather prediction models, satellite information, ground station data, and radar inputs, rendering its forecasts fairly trustworthy.

In addition, the app will show other potential outcomes as gray forecast lines on its charts.

“Forecasts can frequently be inaccurate — it’s the weather, after all! It’s among the most challenging elements to forecast,” Grossman shared with TechCrunch in a phone conversation. “Moreover, our primary frustration with numerous weather apps is that you merely receive their best estimate, and you lack clarity on how confident they are.”

Understanding the alternatives enables individuals to prepare for significant events, he indicated.

“I find it particularly beneficial for winter storms, where, perhaps the storm begins in the morning and you’ll experience snow, but there’s also a chance it may be delayed until later in the afternoon, resulting in rain,” Grossman explained. “Being capable of witnessing that directly on the timeline provides an intuitive understanding of whether all the models concur and you’re set for snow, or if some predict snow while others forecast rain,” he added.

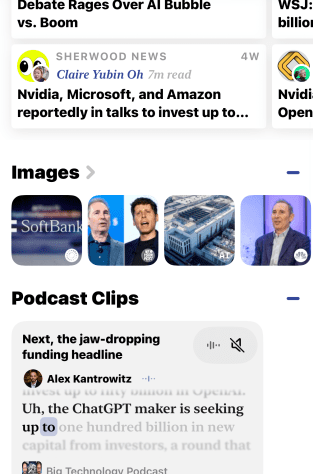

This kind of weather data could be a valuable asset, not only for consumers but also for other developers.

At Dark Sky, the team had offered developers a paid weather API. After Apple acquired Dark Sky, the team worked on WeatherKit, the developer toolkit that provides subscription-based access to Apple’s weather data. Grossman said the team has not yet decided whether Acme Weather will offer a developer API.

For now, Acme Weather is a consumer app priced at $25 a year, with a two-week free trial. The subscription helps offset the cost of integrating various weather models and data sources, which can be expensive.

“Most of our effort has been dedicated to constructing our own forecasts — effectively our own data provider. And this enables us to perform actions such as generating multiple forecasts … [and] develop any map we desire, rather than depending on a third-party map provider,” Grossman remarked.

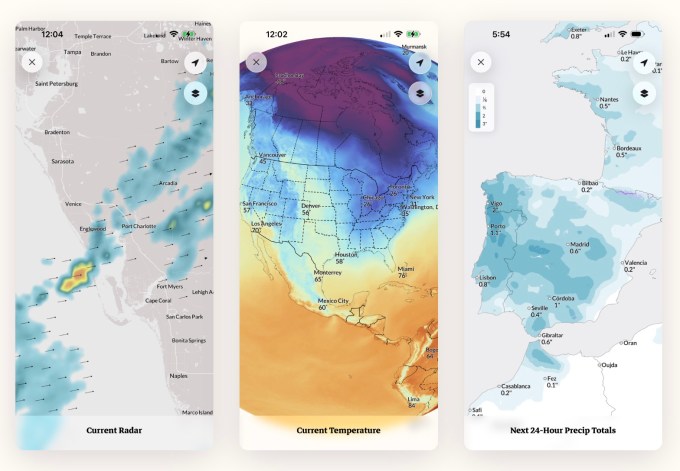

At launch, the app offers a variety of maps, including radar, lightning, rain and snow accumulation, wind, temperature, humidity, cloud cover, and hurricane tracks.

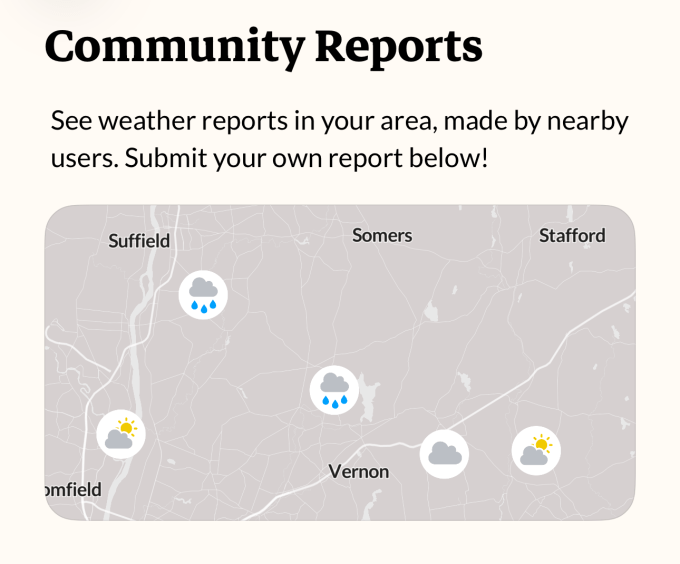

Another function, Community Reports, allows users to share insights about their present conditions to enhance the app’s real-time weather reporting.

While Dark Sky became a beloved weather app for its remarkable accuracy in predicting when it would rain in your area, Acme Weather aims to improve on that and even add some playful elements.

The app features built-in alerts for standard phenomena such as rain, nearby lightning, community reports, government-issued severe weather warnings, and more. It will also experiment with alerts for rarer events, such as a likely rainbow or a spectacular sunset.

These features will be available in a dedicated “Acme Labs” section of the app, and Grossman said the team would be conservative with these predictions because they are inherently hard to get right.

Users will also be able to customize their notifications to focus on the weather events that interest them, such as wind conditions, UV index, or the likelihood of rain within the next day.

The chance to experiment with new concepts is part of what motivated the team to return to indie app development, Grossman stated.

“I genuinely appreciate Apple … but as a large organization, it can be challenging to attempt unconventional, innovative ideas. When serving a billion users, mistakes carry a hefty price,” he conveyed to TechCrunch. “Long software development timelines exist, with numerous stakeholders involved; the prospect of testing various concepts is, I think, fascinating.”

Acme Weather is presently available on iOS. An Android version is in the works.

The team is bootstrapped and includes co-founders Josh Reyes and Dan Abrutyn, who were also part of Dark Sky. The small team is made up of both former Dark Sky employees and recent hires.