It’s widely acknowledged that AI data centers have been putting pressure on the grid. Silicon Valley, however, has been somewhat shielded from the problem, because high land and electricity costs have pushed hyperscalers to build elsewhere.

The tech aristocracy might soon feel the effects of the power shortage, though. Lake Tahoe, the Bay Area’s leisure destination, has less than a year to secure a new energy provider.

The agreement between Liberty Utilities and NV Energy is set to end by May 2027. NV Energy’s electricity will then be redirected to other parts of Nevada, where the data center industry has been flourishing.

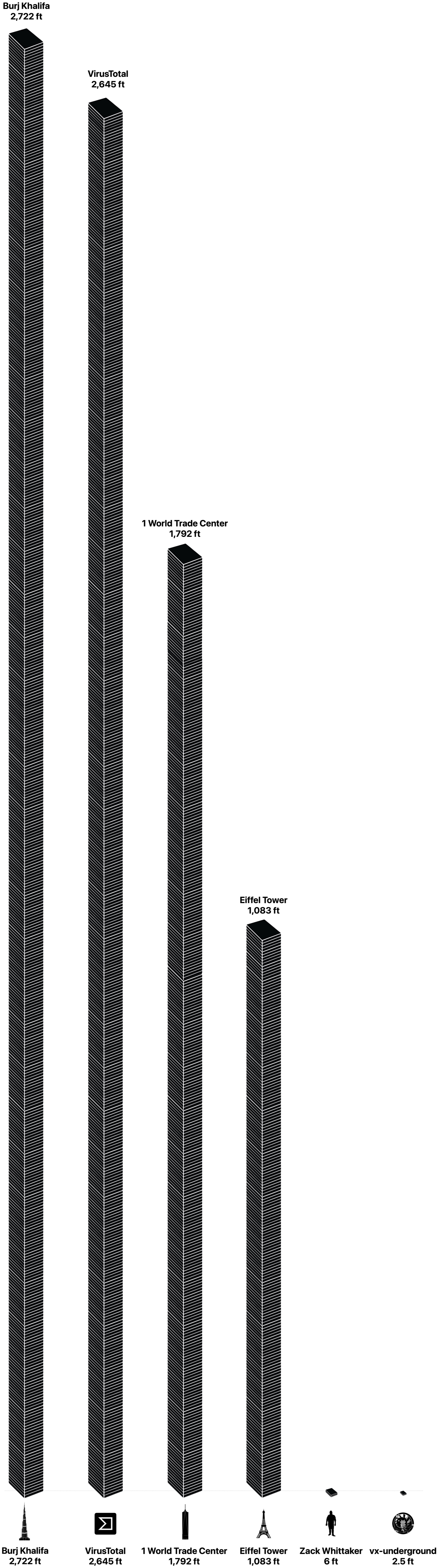

Both Liberty Utilities and NV Energy have indicated that this phase-out has been in the works for a long time, and NV Energy claims that data centers are not responsible. Yet it is difficult to argue that they play no part. NV Energy alone has more than 22 gigawatts of load requests, which, as a Bloomberg report highlighted, is more than 40 times Lake Tahoe’s peak usage.

If data centers were not a factor, it’s easy to envision a scenario in which Liberty Utilities and NV Energy extend their contract. But with data center clients prepared to pay whatever is necessary for electricity, it was all but inevitable that traditional consumers in Lake Tahoe would be left looking for a new supplier.

The timing is particularly unfortunate. Energy markets are tight right now, strained by soaring demand and diminished supply, and further complicated by the previous administration’s confrontation with Iran.

Lake Tahoe’s situation is made worse by the fact that its power lines are more closely tied to Nevada’s grid than to California’s. Consequently, the community must find another electricity supplier, either within NV Energy’s territory or elsewhere in the West.

Given that NV Energy has already prioritized data centers over the mountain community, Lake Tahoe residents, along with second-home owners, will probably need to find an alternative regional energy producer.

That won’t be a simple task. Just one state away, in Utah, a county commission recently approved a 40,000-acre data center project that is projected to consume up to 9 gigawatts of electricity upon completion. The entire state of Utah currently uses about 4 gigawatts. Demand on this scale is almost certain to push up prices across the region.

Taken together, these factors suggest that Lake Tahoe will likely pay more for electricity next year than it does today. Local residents will bear the brunt of the increase, but second-home owners in the area, many of them from Silicon Valley, may also feel the financial strain.

The irony of the AI energy crisis is that those most affected have had minimal influence over the technology or its deployment. Lake Tahoe’s energy situation indicates that this dynamic is beginning to shift, though probably not significantly enough to effect real change.
